We have published a new paper, “Anycast in Context: A Tale of Two Systems”, by Thomas Koch, Ke Li, Calvin Ardi*, Ethan Katz-Bassett, Matt Calder**, and John Heidemann* (all of Columbia unless otherwise indicated; *USC/ISI; **Microsoft and Columbia), at ACM SIGCOMM 2021.

From the abstract:

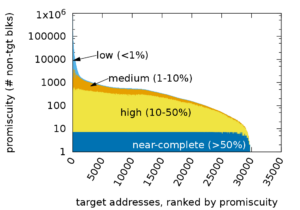

Anycast is used to serve content including web pages and DNS, and anycast deployments are growing. However, prior work examining root DNS suggests anycast deployments incur significant inflation, with users often routed to suboptimal sites. We reassess anycast performance, first extending prior analysis on inflation in the root DNS. We show that inflation is very common in root DNS, affecting more than 95% of users. However, we then show root DNS latency hardly matters to users because caching is so effective. These findings lead us to question: is inflation inherent to anycast, or can inflation be limited when it matters? To answer this question, we consider Microsoft’s anycast CDN serving latency-sensitive content. Here, latency matters orders of magnitude more than for root DNS. Perhaps because of this need, only 35% of CDN users experience any inflation, and the amount they experience is smaller than for root DNS. We show that CDN anycast latency has little inflation due to extensive peering and engineering. These results suggest prior claims of anycast inefficiency reflect experiments on a single application rather than anycast’s technical potential, and they demonstrate the importance of context when measuring system performance.

Tom also blogged about this work at APNIC.


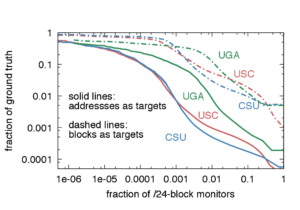
