The paper “Measuring DANE TLSA Deployment” will appear at the Traffic Monitoring and Analysis Workshop in April 2015 in Barcelona, Spain (previously available at http://www.isi.edu/~liangzhu/papers/dane_tlsa.pdf).

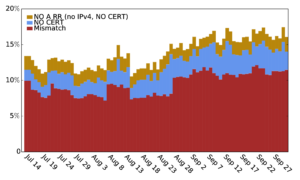

The DANE (DNS-based Authentication of Named Entities) framework uses DNSSEC to provide a source of trust, and with TLSA it can serve as a root of trust for TLS certificates. This complements traditional certificate authentication methods, which is important given the risks inherent in trusting hundreds of organizations, risks already demonstrated by multiple compromises. The TLSA protocol was published in 2012, and this paper presents the first systematic study of its deployment. We studied TLSA usage by developing a tool that actively probes all signed zones in .com and .net for TLSA records. We find that TLSA use is still at an early stage: in our latest measurement, of the 485k signed zones, we find only 997 TLSA names. We characterize how it is being used so far, and find that around 7–13% of TLSA records are invalid. We also find that 33% of TLSA responses are larger than 1500 bytes and will very likely be fragmented.
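To make the terms in the abstract concrete, here is a minimal Python sketch of three checks that such a measurement touches on: where a TLSA record is published, whether its association data matches a certificate, and whether a response exceeds 1500 bytes. It is an illustration based on the TLSA specification (RFC 6698), not the tool described in the paper. The function names are hypothetical, and the caller is assumed to have already extracted the bytes chosen by the TLSA selector (the full certificate or its SubjectPublicKeyInfo, in DER form).

```python
import hashlib


def tlsa_owner_name(host: str, port: int = 443, proto: str = "tcp") -> str:
    """Build the DNS name under which a service's TLSA record is published.

    RFC 6698 prefixes the host name with the port and transport labels,
    for example _443._tcp.example.com.
    """
    return f"_{port}._{proto}.{host.rstrip('.')}."


def tlsa_matches(selected: bytes, matching_type: int, association_data: bytes) -> bool:
    """Compare the selected certificate bytes with TLSA association data.

    matching_type follows RFC 6698: 0 = exact match, 1 = SHA-256, 2 = SHA-512.
    `selected` is assumed to be the DER bytes already chosen by the selector.
    """
    if matching_type == 0:
        return selected == association_data
    if matching_type == 1:
        return hashlib.sha256(selected).digest() == association_data
    if matching_type == 2:
        return hashlib.sha512(selected).digest() == association_data
    raise ValueError(f"unknown TLSA matching type: {matching_type}")


def likely_fragmented(response_size: int, threshold: int = 1500) -> bool:
    """Flag responses larger than the 1500-byte threshold used in the abstract."""
    return response_size > threshold
```

For example, `tlsa_owner_name("example.com")` yields the name a prober would query for an HTTPS service, and `tlsa_matches` with matching type 1 checks a SHA-256 digest against the record. A real validator would also need to verify the DNSSEC chain and honor the certificate usage field, both of which this sketch omits.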

The work in the paper is by Liang Zhu (USC/ISI), Duane Wessels and Allison Mankin (both of Verisign Labs), and John Heidemann (USC/ISI).
