We are happy to announce a new project, LACANIC, the Los Angeles/Colorado Application and Network Information Community.

The LACANIC project’s goal is to develop datasets to improve Internet security and reliability. We distribute these datasets through the DHS IMPACT program.

As part of this work we:

- collect data regularly to build long-term, longitudinal datasets

- curate datasets for special events

- build websites and portals to help make data accessible to casual users

- develop new measurement approaches

We provide several types of datasets:

- anonymized packet headers and network flow data, often to document events like distributed denial-of-service (DDoS) attacks and regular traffic

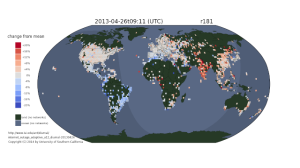

- Internet censuses and surveys for IPv4 to document address usage

- Internet hitlists and histories, derived from IPv4 censuses, to support other topology studies

- application data, like DNS and Internet-of-Things mapping, to document regular traffic and DDoS events

- and we are developing other datasets

LACANIC allows us to continue some of the data collection we were doing as part of the LACREND project, and to develop new methods and new ways of sharing the data.

LACANIC is a joint effort of the ANT Lab involving USC/ISI (PI: John Heidemann) and Colorado State University (PI: Christos Papadopoulos).

We thank DHS’s Cyber Security Division for their continued support!
