The paper “Does Anycast hang up on you?” will appear in the 2017 Conference on Network Traffic Measurement and Analysis (TMA) July 21-23, 2017 in Dublin, Ireland.

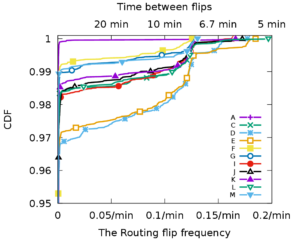

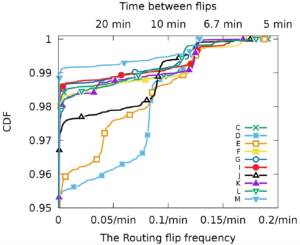

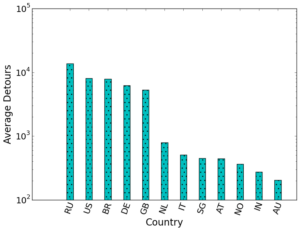

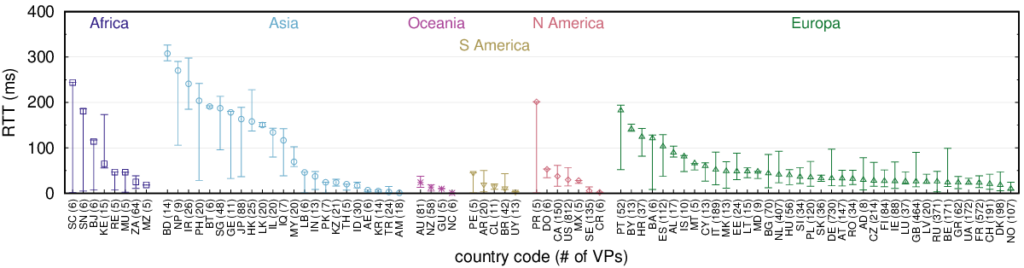

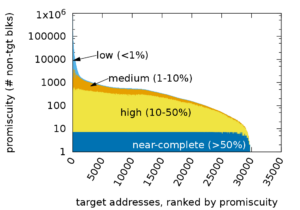

Anycast-based services today are widely used commercially, with several major providers serving thousands of important websites. However, to our knowledge, there has been only limited study of how often anycast fails because routing changes interrupt connections between users and their current anycast site. While the commercial success of anycast CDNs means anycast usually works well, do some users end up shut out of anycast? In this paper we examine data from more than 9000 geographically distributed vantage points (VPs) to 11 anycast services to evaluate this question. Our contribution is the analysis of this data to provide the first quantification of this problem, and to explore where and why it occurs. We see that about 1% of VPs are anycast unstable, reaching a different anycast site frequently (sometimes on every query). In selected experiments, we observe VPs flipping back and forth between two sites of a given service within 10 seconds. Moreover, we show that anycast instability is persistent for some VPs: a few VPs never see a stable connection to certain anycast services during a week or even longer. The vast majority of unstable VPs saw unstable routing towards only one or two services rather than towards all services, suggesting that the cause of the instability lies somewhere on the path to the anycast sites. Finally, we point out that for highly unstable VPs, the probability of hitting a given site is constant, which means the flipping happens at a fine granularity (per packet), suggesting that load balancing might be the cause of anycast routing flips. Our findings confirm the common wisdom that anycast almost always works well, but provide evidence that there are a small number of locations in the Internet where specific anycast services are never stable.
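The two measurements described above can be illustrated with a minimal Python sketch. The first is how often a VP changes sites. The second is whether a VP's chance of hitting a given site stays roughly constant over time. The sketch assumes each VP's data has already been reduced to a list of site identifiers, one per query. The site names, the 10% flip-rate cutoff, and the window size are hypothetical. This is not the analysis code from the paper.

```python
from typing import List, Sequence

# Hypothetical cutoff: flag a VP as "anycast unstable" if at least 10% of
# consecutive query pairs land on different sites. Not the paper's threshold.
UNSTABLE_FLIP_RATE = 0.1


def count_flips(sites: Sequence[str]) -> int:
    """Count consecutive observations that reached different anycast sites."""
    return sum(1 for prev, cur in zip(sites, sites[1:]) if prev != cur)


def flip_rate(sites: Sequence[str]) -> float:
    """Fraction of consecutive observation pairs that changed site."""
    if len(sites) < 2:
        return 0.0
    return count_flips(sites) / (len(sites) - 1)


def is_unstable(sites: Sequence[str], threshold: float = UNSTABLE_FLIP_RATE) -> bool:
    """Flag a VP whose flip rate meets the (hypothetical) threshold."""
    return flip_rate(sites) >= threshold


def window_hit_fractions(sites: Sequence[str], site: str, window: int) -> List[float]:
    """Fraction of observations hitting `site` in each full, non-overlapping window.

    `window` must be a positive number of observations; a trailing partial
    window is ignored.
    """
    fractions = []
    for start in range(0, len(sites) - window + 1, window):
        chunk = list(sites[start:start + window])
        fractions.append(chunk.count(site) / window)
    return fractions


def hit_fraction_spread(sites: Sequence[str], site: str, window: int) -> float:
    """Max minus min of the per-window hit fractions (0.0 if no full window).

    A spread near 0 means the VP hits `site` with a roughly constant probability.
    """
    fractions = window_hit_fractions(sites, site, window)
    return max(fractions) - min(fractions) if fractions else 0.0


if __name__ == "__main__":
    # Hypothetical observations from one VP: site IDs on successive queries.
    obs = ["LAX", "AMS", "LAX", "AMS", "AMS", "LAX", "LAX", "AMS"]
    print(count_flips(obs))                     # 5
    print(is_unstable(obs))                     # True
    print(window_hit_fractions(obs, "LAX", 4))  # [0.5, 0.5]
```

A flip rate near 1 corresponds to the "different site on every query" case. Roughly equal per-window fractions, as in this example, are the pattern one would expect from per-packet load balancing. Occasional routing changes would instead tend to produce long runs at one site followed by long runs at another.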

This paper is joint work of Lan Wei and John Heidemann. A pre-print of the paper is at http://ant.isi.edu/~johnh/PAPERS/Wei17b.pdf, and the datasets from the paper are at https://ant.isi.edu/datasets/anycast/index.html#stability.
