The paper “When the Internet Sleeps: Correlating Diurnal Networks With External Factors” will appear at the ACM Internet Measurement Conference 2014 in Vancouver, Canada (available at http://www.isi.edu/~johnh/PAPERS/Quan14c/ with citation and PDF).

![Predicting longitude from observed diurnal phase ([Quan14c], figure 14c)](http://ant.isi.edu/blog/wp-content/uploads/2014/10/Quan14c_icon-300x175.png)

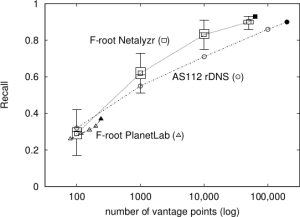

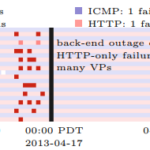

As the Internet matures, policy questions loom larger in its operation. When should an ISP, city, or government invest in infrastructure? How do their policies affect use? In this work, we develop a new approach to evaluate how policies, economic conditions, and technology correlate with Internet use around the world. First, we develop an adaptive and accurate approach to estimate block availability, the fraction of active IP addresses in each /24 block over short timescales (every 11 minutes). Our estimator provides a new lens to interpret data taken from existing long-term outage measurements, thus requiring no additional traffic. (If new collection were required, it would be lightweight, since on average, outage detection requires fewer than 20 probes per hour per /24 block, which is less than 1% of background radiation.) Second, we show that spectral analysis of this measure can identify diurnal usage: blocks where addresses are regularly used during part of the day and idle at other times. Finally, we analyze data for the entire responsive Internet (3.7M /24 blocks) over 35 days. These global observations show when and where the Internet sleeps: networks are mostly always-on in the US and Western Europe, and diurnal in much of Asia, South America, and Eastern Europe. ANOVA (Analysis of Variance) testing shows that diurnal networks correlate negatively with country GDP and electrical consumption, quantifying that national policies and economics relate to networks.
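To make the spectral-analysis step concrete, here is a minimal sketch of how a once-per-day cycle could be picked out of an availability time series. It is not the paper's estimator or classifier: the function names `synthetic_block` and `diurnal_fraction`, the synthetic data, and the "fraction of variance at one cycle per day" score are all assumptions made for illustration. Only the 11-minute sampling interval and the 35-day span come from the abstract.

```python
import cmath
import math
import random

SAMPLE_INTERVAL_S = 11 * 60       # one availability estimate every 11 minutes (from the abstract)
SECONDS_PER_DAY = 24 * 60 * 60


def synthetic_block(days=35, base=0.5, swing=0.3, noise=0.02, seed=0):
    """Hypothetical availability series for one /24 block (made-up data, not a measurement)."""
    rng = random.Random(seed)
    n = days * SECONDS_PER_DAY // SAMPLE_INTERVAL_S
    series = []
    for t in range(n):
        phase = 2.0 * math.pi * t * SAMPLE_INTERVAL_S / SECONDS_PER_DAY
        value = base + swing * math.sin(phase) + rng.gauss(0.0, noise)
        series.append(min(1.0, max(0.0, value)))  # availability is a fraction in [0, 1]
    return series


def diurnal_fraction(availability, cycles_per_day=1.0):
    """Fraction of the series' variance carried by a single sinusoid at the given frequency."""
    n = len(availability)
    mean = sum(availability) / n
    variance = sum((a - mean) ** 2 for a in availability) / n
    if variance < 1e-12:
        return 0.0  # an essentially flat series has no periodic component to measure
    # Phase advance per sample for the chosen frequency.
    step = 2.0 * math.pi * cycles_per_day * SAMPLE_INTERVAL_S / SECONDS_PER_DAY
    # Single-frequency Fourier coefficient of the mean-removed series.
    coeff = sum((a - mean) * cmath.exp(-1j * step * t)
                for t, a in enumerate(availability))
    amplitude = 2.0 * abs(coeff) / n          # amplitude of the fitted sinusoid
    return min(1.0, (amplitude ** 2 / 2.0) / variance)
```

Under these assumptions, a block whose availability swings once per day (`synthetic_block(swing=0.3)`) is expected to score near 1, while a block that stays mostly on with only random noise (`synthetic_block(base=0.9, swing=0.0)`) should score near 0. A full analysis would examine the whole spectrum rather than a single frequency.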

Citation: Lin Quan, John Heidemann, and Yuri Pradkin. When the Internet Sleeps: Correlating Diurnal Networks With External Factors. In Proceedings of the ACM Internet Measurement Conference, p. to appear. Vancouver, BC, Canada, ACM. November, 2014.

All data in this paper is available to researchers at no cost, and source code to our analysis tools is available on request; see our diurnal datasets webpage.

This work is partly supported by DHS S&T, Cyber Security division, agreement FA8750-12-2-0344 (under AFRL) and N66001-13-C-3001 (under SPAWAR). The views contained herein are those of the authors and do not necessarily represent those of DHS or the U.S. Government. This work was classified by USC’s IRB as non-human subjects research (IIR00001648).
