We have released a new technical report: “Having your Privacy Cake and Eating it Too: Platform-supported Auditing of Social Media Algorithms for Public Interest”, available at https://arxiv.org/abs/2207.08773.

From the abstract:

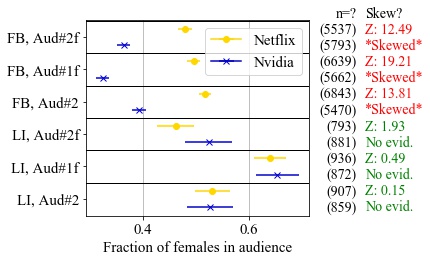

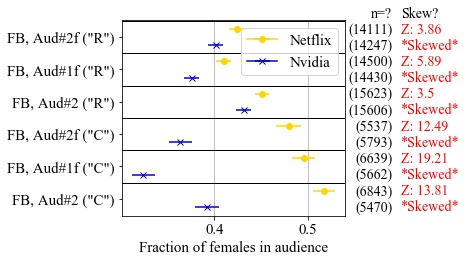

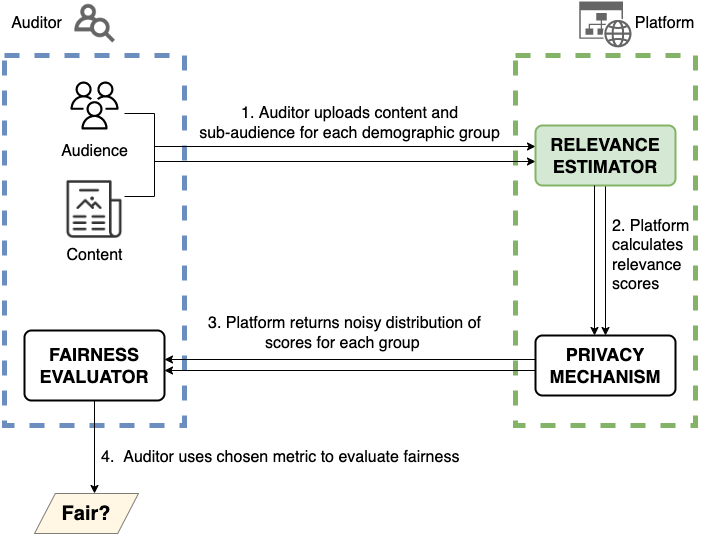

Legislations have been proposed in both the U.S. and the E.U. that mandate auditing of social media algorithms by external researchers. But auditing at scale risks disclosure of users’ private data and platforms’ proprietary algorithms, and thus far there has been no concrete technical proposal that can provide such auditing. Our goal is to propose a new method for platform-supported auditing that can meet the goals of the proposed legislations. The first contribution of our work is to enumerate these challenges and the limitations of existing auditing methods to implement these policies at scale. Second, we suggest that limited, privileged access to relevance estimators is the key to enabling generalizable platform-supported auditing of social media platforms by external researchers. Third, we show platform-supported auditing need not risk user privacy nor disclosure of platforms’ business interests by proposing an auditing framework that protects against these risks. For a particular fairness metric, we show that ensuring privacy imposes only a small constant factor increase (6.34× as an upper bound, and 4× for typical parameters) in the number of samples required for accurate auditing. Our technical contributions, combined with ongoing legal and policy efforts, can enable public oversight into how social media platforms affect individuals and society by moving past the privacy-vs-transparency hurdle.

This technical report is a joint work of Basileal Imana from USC, Aleksandra Korolova from Princeton University, and John Heidemann from USC/ISI.